What Percentage of Faculty Adopt OER After Being Asked to Review an Open Education Resource

Abstract

Although textbooks are a traditional component in many higher education contexts, their increasing cost has led many students to forgo purchasing them and some faculty to seek substitutes. One such alternative is open educational resources (OER). The present study synthesizes results from sixteen efficacy and twenty perceptions studies involving 121,168 students or faculty that examine either (1) OER and student efficacy in higher education settings or (2) the perceptions of college students and/or instructors who have used OER. Results across these studies suggest students achieve the same or better learning outcomes when using OER while saving significant amounts of money. The results also indicate that the majority of faculty and students who have used OER had a positive experience and would do so again.

Introduction

For better or worse, the textbook remains a staple in American education. The literature regarding the use of textbooks and other curriculum materials is extensive and complex. Crawford and Snider (2000) argue that curriculum materials are a vital part of the educational enterprise, suggesting that the vast majority of classroom instruction is centered on textbooks. In contrast, Slavin and Lake's (2008) synthesis of 87 mathematics curriculum studies indicates that instructional improvement had a larger impact on student performance than the choice of curriculum. Determining the efficacy of one set of curriculum materials relative to another is often difficult. For example, the National Research Council (2004) reviewed 698 peer-reviewed studies of nineteen different mathematics curriculum materials at the K-12 level (ages 5–18) and found that they could not state which programs were most effective.

The lack of clarity regarding the overall efficacy of textbooks is compounded by the fact that many students do not or cannot access commercial textbooks (CT), particularly in higher education, because of their high cost. In many cases, students report not purchasing required CT and consequently underperforming academically (Florida Virtual Campus 2016). In addition to academic challenges, the high price of CT is part of a larger problem of college affordability, with more than half of college students reporting some level of food insecurity (Broton and Goldrick-Rab 2018).

Consequently, recent years have seen a dramatic increase in the use of open educational resources (OER), which are defined as "teaching, learning, and research resources that reside in the public domain or have been released under an intellectual property license that permits their free use and repurposing by others" (Hewlett 2017). OER provide a no-cost alternative to CT, and may provide several material benefits to students. For example, a significant number of students who used OER reported spending the money saved to purchase groceries (Ikahihifo et al. 2017). OER advocates contend that replacing CT with OER will benefit students financially and may improve their academic performance.

Two central qualities faculty consider when selecting learning materials for their students are proven efficacy and trusted quality (Allen and Seaman 2014). Some have a perception that free textbooks equal lower quality textbooks and therefore lower learning outcomes (Kahle 2008); thus research has been performed to examine the quality and efficacy of OER. To examine research connected with these criteria, Hilton (2016) synthesized research regarding the relationship between OER use and student performance (proven efficacy) as well as student and faculty perceptions of OER (trusted quality). He identified a total of sixteen OER efficacy and perceptions studies that had been published between 2002 (the year the term "Open Educational Resources" was coined) and August 2015. I next review key findings from this study.

OER efficacy and perceptions research in higher education between 2002 and 2015

Of the sixteen articles identified by Hilton (2016), nine investigated the relationship between OER and learning outcomes, providing a collective 46,149 student participants. Only one of these nine studies indicated OER use was associated with lower learning outcomes at a higher rate than with positive outcomes, and even this study found that, in general, the use of OER resulted in non-significant differences. Three of the nine studies had results that significantly favored OER over traditional textbooks, another three revealed no significant difference, and two did not discuss the statistical significance of their findings.

One challenge with these seemingly positive findings is that several of the studies have serious methodological issues. For example, Feldstein et al. (2012) compared courses that used OER with different courses that were not using OER. This introduces so many confounding variables that it is questionable whether any differences in the course outcomes are attributable to OER. Similarly, Pawlyshyn et al. (2013) reported dramatic improvement when OER was adopted; however, the OER adoption came simultaneously with flipped classrooms, making it difficult to correlate the change in efficacy with OER. These critiques have been raised by other researchers (Gurung 2017; Griggs and Jackson 2017).

With respect to student and faculty perceptions, Hilton (2016) synthesized the perceptions of 4510 students and faculty members surveyed across nine separate studies. Not once did students or faculty state that OER were less likely than commercial textbooks to aid student learning. Overall, roughly half of students and faculty noted OER to be analogous to traditional resources, a sizeable minority considered them to be superior, and a much smaller minority found them to be inferior.

The present study continues where Hilton (2016) left off, by identifying, analyzing, and synthesizing the results of every efficacy and perceptions study published between September 2015 and December 2018. The research questions are as follows:

1. Has there been an increase in the number of published research studies on the perceptions and efficacy of OER?

2. What are the collective findings regarding the efficacy of OER in higher education between September 2015 and December 2018?

3. What are the collective findings regarding student and faculty perceptions of OER in higher education between September 2015 and December 2018?

4. What are the aggregated findings regarding OER efficacy and perceptions between 2002 and 2018?

Method

The methodology in the present study largely follows that utilized by Hilton (2016). Five criteria were used to determine inclusion in this research synthesis. First, OER were the primary learning resource used in a higher education setting and had some type of comparison made between them and CT. Second, the research was published by a peer-reviewed journal, part of an institutional research study, or a graduate thesis or dissertation. Third, the study included results related to either student efficacy or faculty and/or student perceptions of OER. Fourth, the study had at least 50 participants. Finally, the study needed to have been written in English, and be published between October of 2015 and December of 2018. Articles that were published online in 2018 but part of 2019 journal publications were not included.

Potential articles to be included in the synthesis were identified based on the following three approaches. As demonstrated by Harzing and Alakangas (2016), Google Scholar provides more comprehensive coverage than similar databases such as Web of Science or Scopus; therefore, I used Google Scholar to identify every article that cited any of the sixteen studies included in Hilton (2016) and was published between 2015 and 2018. This led to 788 potential articles, each of which was reviewed to verify whether it met the five criteria listed in the previous paragraph. I also searched ProQuest Dissertations and Theses using the keyword "Open Educational Resources" between October 2015 and December 2018, which produced 314 results. These were likewise reviewed to determine whether they met the study parameters. Finally, I communicated with researchers who published on OER-related topics regarding any additional studies they were aware of. The result of these approaches is the 29 studies discussed in the present study.

Results

Number of published studies

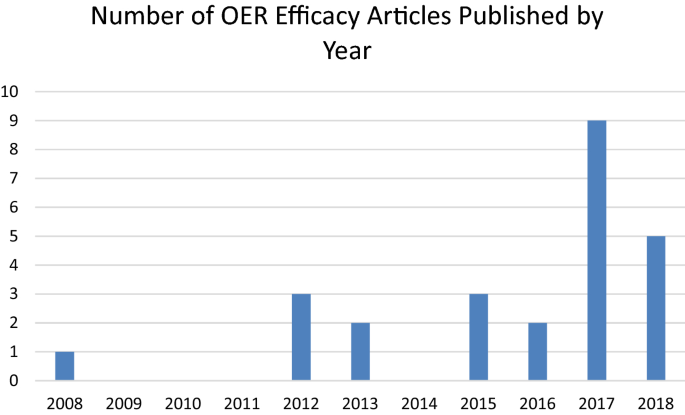

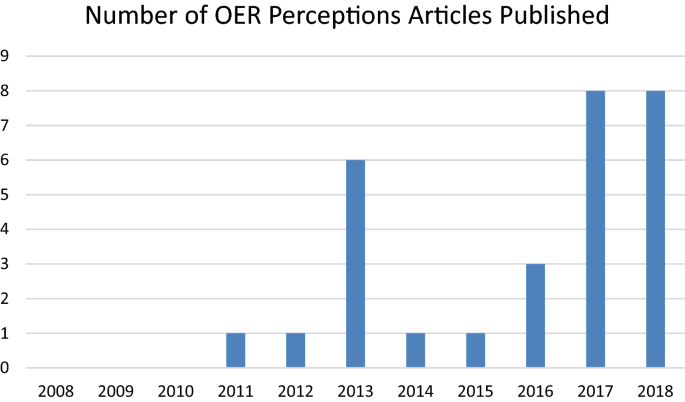

Between 2002 and August of 2015, seven OER efficacy studies, seven OER perceptions studies, and two studies measuring both OER efficacy and perceptions were published (sixteen total studies). Between September 2015 and December 31, 2018, an additional nine OER efficacy studies, thirteen OER perceptions studies, and seven OER efficacy and perceptions studies were published (twenty-nine total studies). This illustrates a rapid rise in research related to OER efficacy and perceptions, with more published studies in the past three years than the previous fifteen. This rise is summarized in Figs. 1 and 2.

Fig. 1 OER efficacy studies published 2008–2018

Fig. 2 OER perceptions studies published 2008–2018
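The study counts reported above can be cross-checked with a few lines of arithmetic. The following is a minimal sketch in Python, not part of the original analysis; the dictionary keys and the `summarize` helper are illustrative names, and the numbers are simply those stated in the text.

```python
# Study counts as stated in the text (not re-derived from the underlying articles).
# Keys and helper names are illustrative assumptions, not from the source.
periods = {
    "2002 to Aug 2015": {"efficacy_only": 7, "perceptions_only": 7, "both": 2},
    "Sep 2015 to Dec 2018": {"efficacy_only": 9, "perceptions_only": 13, "both": 7},
}


def summarize(counts):
    """Return total studies plus how many report efficacy or perceptions data."""
    return {
        "total": sum(counts.values()),
        # A study measuring both outcomes counts toward each category.
        "efficacy": counts["efficacy_only"] + counts["both"],
        "perceptions": counts["perceptions_only"] + counts["both"],
    }


summary = {name: summarize(counts) for name, counts in periods.items()}

for name, result in summary.items():
    print(name, result)
```

Under these counts, studies that measured both outcomes are counted once in the total but contribute to both the efficacy and the perceptions tallies, which is why the category tallies sum to more than the total.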

Collective findings from efficacy research between 2015 and 2018

Sixteen efficacy studies that met the aforementioned criteria were published between September 2015 and December 2018, containing a total of 114,419 students. The number of participants, in some respects, is deceptively large, as some of the studies [e.g., Wiley et al. (2016) and Hilton et al. (2016)] contained large overall populations but only a small portion of students who used OER. In total, 27,710 students across these studies used OER and 86,709 used CT. The following paragraphs provide a brief overview of each of these OER efficacy studies, organized by how the study controlled for teacher and student differences.
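As a quick check on the participant totals just reported, the sketch below (Python, with illustrative variable names that are not from the source) adds the OER and CT groups and computes the share of participants who actually used OER, which is the point behind the caution that the headline number is deceptively large.

```python
# Participant counts as stated in the text; variable names are illustrative.
oer_students = 27_710
ct_students = 86_709

total_students = oer_students + ct_students

# Fraction of all efficacy-study participants who used OER.
oer_share = oer_students / total_students

print(f"Total participants: {total_students:,}")
print(f"Share using OER: {oer_share:.1%}")
```

Roughly one participant in four used OER, so comparisons across the pooled studies rest on a much smaller treatment group than the overall total suggests.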

No controls for teacher or student differences

Four studies did not make any attempt to control for teacher or student differences. Essentially two different methodologies were employed, each in two articles. Using a methodology that compares student success metrics based on whether students used a CT or OER, Wiley et al. (2016) analyzed the rate at which students at Tidewater Community College dropped courses during the add/drop period at the start of a semester. They found that students were 0.8% less likely to drop courses when using OER. Although the difference was small, it was statistically significant.

Hilton et al. (2016) followed Wiley et al. (2016) by reviewing two additional semesters of OER adoption at Tidewater Community College. Their data set included the data from Wiley et al. (2016), for a total of 45,237 students, 2014 of whom used OER. They compared drop, withdrawal, and passing rates based on whether students used OER or CT. They found that when combining drop, withdrawal, and passing rates, students who used OER were about 6% more likely to complete the class with credit than their peers who did not use OER (6.6% in the face-to-face courses and 5.6% in online courses).

A separate methodology examined faculty reports of OER implementation. Croteau (2017) examined twenty-four datasets involving 3847 college students in Georgia who used OER. These datasets came from faculty members reporting on the results they obtained from this OER adoption. Unfortunately, the data was inconsistent: some faculty provided completion rates, while others reported on grade distributions or other metrics. In total, instructors provided pre/post efficacy measures for 27 courses. Across the faculty reports, there were "twenty-four data sets for DFW [drop, failure, withdrawal] rates, eight data sets for completion rate, fourteen data sets for grade distribution, three data sets reported for final exam grades, three data sets reported for course specific assessment and one data set reported for final grades" (p. 97). While results varied across sections (e.g., with respect to DFW data, 11 sections favored CT, 12 sections favored OER, and one was unchanged), across each of these metrics there were no overall statistical differences in results when comparing pre and post OER.

Similar to Croteau (2017), Ozdemir and Hendricks (2017) examined the reports of multiple faculty who had adopted OER. In total, 28 faculty provided some type of evaluation regarding the impact of adopting an open textbook on student learning outcomes; however, their metrics varied widely, and Ozdemir and Hendricks did not report the total number of students involved (clearly it was more than 50; however, because it was not specified, I have put "not provided" in Table 1). Twenty instructors reported that learning outcomes had improved because of using open textbooks, and eight said that there was no difference. Of the twenty who said that learning outcomes had improved, nine provided data such as improved scores on exams or assignments, or improved course grades overall. Eight provided no data or explanation to support their claims that student learning had improved, and three only provided anecdotal evidence. Fourteen instructors described student retention in their reports; eight said student retention improved, with six stating that it remained the same. Like Croteau (2017), this study provides a valuable synthesis of instructor self-reports on the outcomes of using OER in their classes; however, as noted by the authors, there was little rigor or control in the teacher reporting process, limiting the value of the overall study.

Studies that accounted for teacher, but not student variables

Five studies accounted for teacher, but not student variables. The design of each study was similar in that the same faculty member taught the identical course (to control for teacher variables), in some instances with OER and in others with a CT. Researchers used student efficacy outcomes as the dependent variables in these studies. Chiorescu (2017) examined the results of 606 students taking college algebra at a college in Georgia across four semesters. In spring 2014, fall 2014, and fall 2015, Chiorescu used a math CT, coupled with MyMathLab (an online software math supplement). In spring 2015 she used an OER textbook, coupled with WebAssign, a different online math supplement. Chiorescu found statistically significant differences in the percentages of students earning a C or better in the course between spring 2014 and spring 2015, favoring the use of OER. Similar results were noted between spring 2015 (OER) and fall 2015 (non-OER); however, there were no significant differences between spring 2015 (OER) and fall 2014 (non-OER). She also found that students were statistically more likely to receive an A when using OER and that students were approximately half as likely to withdraw from the course when using OER (also a statistically significant finding). In this study, unlike many others, the instructor went back to a CT after using OER, given that she found the online math component aligned to OER to be inferior to the online math component used in connection with the CT. She found that both grades and withdrawal rates, which had improved during the semester in which OER were used, regressed to their previous levels when OER stopped being used in the course.

Using a similar design, Hendricks et al. (2017) examined the academic performance of students in an introductory physics course at the University of British Columbia. They compared the results of students between fall 2012 and spring 2015 (students used CT) with students in fall 2015–spring 2016 (students used OER). Concurrent with the change in textbooks were significant pedagogical changes, although the teachers stayed the same. There were 811 students in the OER semesters, with an unspecified number (estimated to be 2400) in the CT semesters. The researchers found no significant differences when comparing grade distributions; however, they found a small significant improvement in final exam score when comparing fall 2015 (OER) with fall 2014 and fall 2013. They also compared student scores on the Colorado Learning Attitudes about Science Survey (CLASS) for Physics, a common diagnostic measurement in physics education. A one-way ANOVA indicated that there were no significant differences when all categories were combined; however, there was a small negative shift in the problem-solving category during the year that OER were used.

Choi and Carpenter (2017) examined the academic results across five semesters of students taking a course on Human Factors and Ergonomics with the same instructor. In two semesters students used CT (n = 114); in the other three they used OER (n = 175). Researchers measured differences in student learning based on midterm and final exam scores, as well as overall course grade. Midterm exam grades fluctuated widely, with significant differences both before and after the introduction of OER, with the tendency towards lower scores post-OER. In one of the three OER semesters, final exam scores were lower than they had been in the CT semesters; there were no significant differences in the other two semesters. In terms of overall course grades, there were no significant differences.

Lawrence and Lester (2018) used a similar design in an introductory American Government class, comparing two teachers who used CT in fall 2014 with these same teachers who used OER in spring 2015. Although the researchers do not specify the number of students in their study, based on the survey results they report, there were at least 162 students who took the course in fall of 2014 and 117 in spring of 2015. There were no statistically significant differences in course GPA average or DFW rates. Students who used OER did perform better for one of the two teachers studied; however, the authors attribute this change to policy changes regarding online classes. They conclude that their "findings do not support the notion that OERs represent a dramatic improvement over commercial texts, nor do they indicate that students perform substantially worse when using open content texts either" (p. 563).

Ross et al. (2018) studied the use of the OpenStax Sociology textbook in an introductory sociology course at the University of Saskatchewan. One instructor taught a sociology course with a CT in the 2015–2016 school year (n = 330), and then used an OpenStax textbook in the fall of 2016 (n = 404). The researchers found no significant differences in course grades between the two groups. However, students using CT had a completion rate of 80.3%, whereas students using OER completed at a rate of 85.3%, a statistically significant difference.

Studies that accounted for student, but not teacher variables

Three studies accounted for student, but not teacher differences. In each case, the study performed statistical analyses that controlled for student variables such as income, GPA, mother's education, and/or ACT scores. Multiple teachers were involved in each study and there were no attempts to control for teacher variables. Westermann Juárez and Muggli (2017) examined the results of first-year students enrolled in a mathematics class at an institution of higher education in Chile, notable in part for being the only study outside the United States and Canada to meet the criteria for inclusion in the present study. Students were in three different groups; one used a CT (n = 30), one used Khan Academy videos (OER) (n = 35), and a third used an open textbook (OER) (n = 31). The researchers used propensity score matching to control for student age, family income, and number of years of education of the mother. When comparing student results using a CT versus Khan Academy, the researchers found that students who used a CT had higher class attendance but scored lower on the final exam. In contrast, those who used the open textbook scored lower on the final exam than students using a CT; there were no significant differences in class attendance. While there were differences between the instantiations of OER and CT in terms of attendance and final exam score, there were no differences between CT and OER in terms of overall course score.

Grewe and Davis (2017) studied 146 students who attended Northern Virginia Community College. These students were enrolled in an online introductory history class in fall of 2013 (two sections used OER, two sections did not) or spring 2014 (three sections used OER, three did not). The authors gave the total number of students but did not specify the number of students per section; for analysis purposes in Table 1, I have assumed equal numbers of students in each section. While the online courses were all created from the same master template, different teachers administered the courses and may have had variations in how they responded in discussion forums, graded student work, etc. Researchers attempted to control for student differences by using prior student GPA as a covariate. They found "a moderately positive relationship between taking an OER course and academic achievement" and that even after accounting for prior GPA, "enrollment in an OER course was…a significant predictor of student grade achievement" (n.p.).

Gurung (2017) sent a Qualtrics survey to course instructors at seven institutions, who in turn forwarded it to their students. In the first study reported in this article, 569 students from five institutions who used an electronic version of the NOBA Discovering Psychology OER responded to the survey. At the other two institutions, 530 students who used hard copies of one of two different CT responded. The survey asked students to share their ACT scores, study habits, use of the textbooks, and behaviors demonstrated by their teachers, and then answer fifteen psychology questions drawn from the 2007 AP Psychology exam. When controlling for ACT scores, students who used OER scored 1.2 points (13%) lower than those who used CT.

Gurung noted that potential limitations of his first study included the fact that the OER textbooks were electronic compared with the hard-copy CT, and that the AP exam questions may have been more closely aligned to the CT. In the second study, Gurung rectified these problems by including students who used both hard and electronic copies of CT as well as hard and electronic copies of the NOBA OER. He also included ten quiz questions from the NOBA test bank. In this second study, 1447 students at four schools who used the open NOBA textbook responded to the survey; 782 students at two schools who used a CT responded. All other procedures and survey questions mirrored the first study. When comparing total quiz scores, there was an overall significant effect of the book used, favoring those who used the CT. However, when only the NOBA test bank items were used there were no significant differences, indicating alignment may be the reason for the difference between groups. In addition, there were no statistically significant differences when comparing the quiz scores of those who used an electronic version of OER versus those who used an electronic version of a CT. There were also differences in quiz results between the two different schools that used commercial textbooks (each of which used a different CT). It may be that one CT was superior, or that the teaching at one institution was stronger, leading to the difference.

Studies that accounted for student and teacher variables

Four studies accounted for both student and teacher variables. Winitzky-Stephens and Pickavance (2017) assessed a large-scale OER adoption across 37 different courses in several general education subjects at Salt Lake Community College. In total, there were 7588 students who used OER compared with 26,538 students who used commercial materials. The researchers used multilevel modeling to control for course subject, course level, individual instructors, and student backgrounds (including age, gender, race, new/continuing student, and prior GPA). After accounting for these variables, they found that for continuing students, the use of OER was not a significant factor in student grade, pass rate, or withdrawal rate. For new students, OER had a slight, positive impact on course grade, but not on pass or withdrawal rates.

Colvard et al. (2018) performed a similar large-scale analysis by examining course-level faculty adoption of OER at the University of Georgia. They evaluated eight undergraduate courses that switched from CT to OpenStax OER textbooks between fall 2010 and fall 2016. In contrast to Winitzky-Stephens and Pickavance (2017), who used statistical controls to account for teacher variables, Colvard et al. (2018) only included sections where instructors had taught with both textbook versions. Researchers found statistically significant differences in grade distributions favoring OER. There was a 5.5% increase in A grades after OER adoption, a 7.7% increase in A- grades, and those receiving a D, F, or W grade decreased by 2.7%. This study was also the first to specifically examine the interactions among different student populations. Researchers found an overall GPA increase of 6.90% for non-Pell recipients and an 11.0% increase for Pell recipients. Furthermore, OER adoption resulted in a 2.1% reduction in DFW grades for non-Pell-eligible students versus a 4.4% reduction for Pell-eligible students, indicating that the OER effect was stronger for students with greater financial need. Non-white students similarly saw larger grade boosts and larger decreases in the likelihood of withdrawal than did white students, although both groups showed better outcomes when using OER. The largest differential between student groups came in the comparison between full- and part-time students. Course grades improved by 3.2% for full-time students but jumped 28.1% for part-time students. The DFW rate for full-time students actually increased from 6.3% to 7.4%; however, the rate for part-time students dropped dramatically from 34.3% to 24.2%.
One limitation of their approach was that results were only reported at an aggregate level because Pell eligibility data was only given to the researchers in aggregate (not by course or instructor level). This was a stipulation from the Financial Aid office in order to prevent any students from possibly being identified. While this is a reasonable limitation, it is possible that reporting results in aggregate masked or created differences that would not have been present had results been disaggregated.

Jhangiani et al. (2018) attempted to control for both student variables (through demographic analysis and a pretest) and instructor variables (by using the same instructors) in their examination of seven sections of an introductory psychology course taught in Canada. Two sections were assigned digital OER, two were assigned the same OER but in hardcopy format, and three were assigned a CT. Three different instructors taught the seven courses; one instructor taught back-to-back semesters, first with the print OER, then with the print CT. The other two instructors taught with either open or commercial textbooks, but not both. Students in all conditions had similar demographic variables and had equivalent knowledge of psychology at the beginning of the semester. Those using CT had completed more college credits, were taking fewer concurrent courses, and reported spending more time studying than those who used OER. Collectively, these indicators suggest that the two groups are roughly equivalent, with any differences favoring those in the CT condition. Students took three exams, identical for each section. When all sections were analyzed in a MANOVA, students assigned the digital open textbook performed significantly better than those who used the commercial textbook on the third of the three exams. There were no differences in the other two exams. When only the two sections taught by the same teacher were analyzed (to control for teacher bias), students using OER outperformed students using CT on one exam and there were no significant differences on the other two.

Clinton (2018) used a similar approach to compare the overall course scores and withdrawal rates of students taking her introductory psychology classes across two semesters. She compared 316 students using a CT in spring of 2016 with 204 students who used the OpenStax Psychology textbook in fall of 2016. The demographic makeup of students, as well as their self-reports on how they used the textbooks, were similar. When accounting for differences in student high school GPAs, there was no impact on course scores connected with OER adoption. The number of students who withdrew during the OER semester was significantly lower than when CT were used, a difference that did not appear to be related to GPA.

A summary of the OER efficacy research published between September 2015 and December 31, 2018 is provided in Table 1.

Collective findings from perceptions research between 2015 and 2018

There were twenty OER perceptions studies published between September 2015 and December 2018, involving 10,807 students and 379 faculty. Six of these studies also included efficacy data, and thus were also identified as efficacy studies in the previous section. I next provide a brief overview of each of these perceptions studies, organized by two different types of studies. Fifteen of the twenty studies directly asked students to compare OER they have used with CT, and five compare student reports about the OER or CT they were currently using.

Studies examining direct comparisons between OER and CT

Pitt (2015) surveyed 127 educators who used OER, specifically materials from OpenStax College, by putting a link to her survey in the OpenStax newsletter. Those who completed the survey had used ten different OpenStax textbooks. Sixty-four percent of faculty members reported that using OER facilitated meeting diverse learners' needs, and sixty-eight percent perceived greater student satisfaction with the learning experience when using OER.

Delimont et al. (2016) surveyed 524 learners and thirteen faculty members across thirteen courses at Kansas State University regarding their experiences with both "open" and "alternative" resources (where alternative resources refer to free, but copyrighted, materials). When students evaluated the statement, "I prefer using the open/alternative educational resource instead of buying a textbook for this course (1 = Strongly disagree, 7 = Strongly agree)" they rated it 5.7 (moderately agree). Twelve of the 13 faculty members interviewed preferred teaching with OER and stated their perception that students learned better when using OER and alternative resources as opposed to CT. When asked to rate their experience with the open/alternative textbooks, faculty members rated it 6.5 on a seven-point scale.

CA OER Council (2016) surveyed faculty and students at California community colleges and state universities who adopted OER in the fall of 2015. Seven of the sixteen surveyed faculty members felt that the OER were superior to CT they had used. Five faculty rated the OER as being equivalent to CT, with the remaining four rating it as worse. Faculty expressed concern regarding ancillary materials such as PowerPoints and test banks. Of the 14 faculty members who responded to a question about the quality of ancillary materials, five felt the OER support materials had sufficient quality, three were neutral, and six felt the materials lacked sufficient quality. When students (n = 351) were asked if the OER used were better than traditional resources, 42% rated OER as better, 39% as about the same, 11% as worse than CT, and 8% declined to answer. All students in the study wanted to use OER textbooks in the future and stated they would recommend the use of OER to friends.

Illowsky et al. (2016) surveyed 325 students in California who used two versions of an open statistics textbook. The first survey (n = 231) asked students about an earlier version of the OER. Fifty percent of students said that, if given the option between courses using OER or CT, they would choose to take future classes that used OER; 32% had no preference, with the remaining 19% preferring to enroll in courses with a printed CT. Twenty-five percent of students rated OER as better than CT, 62% as the same and 13% as worse relative to CT. A second survey (n = 94) was given to students who used a later OpenStax version of the textbook, with similar results.

As stated in the efficacy section, Ozdemir and Hendricks (2017) studied 51 e-portfolios written by faculty in the state of California who used OER. They report, "The vast majority of faculty also reported that the quality of the textbooks was as good or better than that of traditional textbooks" (p. 98). Moreover, 40 of the 51 portfolios contained faculty insights regarding students' attitudes towards the open textbooks; only 15% of these e-portfolios reported any negative student comments.

Jung et al. (2017) surveyed 137 faculty members who used OpenStax textbooks. Sixty-two percent stated OpenStax textbooks had the same quality as traditional textbooks; 19% thought the quality was better, and 19% thought it was worse. Faculty were also specifically asked about the time they spent preparing the course after adopting an OpenStax text. Seventy-two percent of faculty stated they spent the same amount of time preparing to teach a class using open textbooks, 18% spent more and 10% spent less. Those who reported spending more time were asked if the extra amount of preparation time was acceptable, and 78% said it was.

Hendricks et al. (2017) surveyed 143 physics students; 72% said the OER had the same quality as CT. An additional 21% said OER were better than CT and 7% said they were worse. Students were also asked to rate their agreement with the following statement: "I would have preferred to purchase a traditional textbook for this course rather than using the free online textbook." Sixty-four percent of respondents disagreed, 18% were neutral, and 18% agreed. The primary reason given for choosing OER was cost, and the primary reason for choosing a traditional textbook was a preference for print materials.

Jhangiani and Jhangiani (2017) surveyed 320 college students in British Columbia who were registered in courses with an open textbook. Students were asked to rate their agreement with the statement, "I would have preferred to purchase a traditional textbook for this course"; 41% strongly disagreed, with an additional 15% slightly disagreeing. Another 24% were neutral, with 20% either slightly or strongly agreeing.

Cooney (2017) surveyed 67 and interviewed six students who were enrolled in a health psychology course at New York City College of Technology that used OER. Those interviewed had a favorable perspective of OER, commenting on both cost savings and convenience. Students who were surveyed clearly preferred OER to CT; 42% said they were much better, 39% somewhat better, 16% neutral, and only 3% somewhat or much worse.

Ikahihifo et al. (2017) analyzed survey responses from 206 community college students in eleven courses that used OER. Students were asked, "On a scale of 1 (poor) to 5 (excellent), how would you rate the quality of the OER material versus a textbook?" (n.p.). A majority (55%) rated the OER as excellent relative to a CT. An additional 25% rated OER as being slightly better. Fifteen percent considered the two to be equal; 5% considered the quality of the OER material to be less than that of a traditional textbook.

Watson et al. (2017) surveyed 1299 students at the University of Georgia who used the OpenStax biology textbook. A majority of students (64%) reported that the OpenStax book had approximately the same quality as traditional books and 22% said it had higher quality. Only 14% ranked it lower than traditional textbooks. The two most common reasons students gave for liking the OpenStax book were the free cost and ease of access.

Hunsicker-Walburn et al. (2018) surveyed 90 students at a community college who reported that they had used OER in lieu of a traditional textbook. While little detail was provided about the students, the courses, or the OER used, the results were similar to other studies in this genre. They found that 33% of these students said the quality of OER was better than that of traditional textbooks, 54% said it was the same, and 12% said OER were worse.

Abramovich and McBride (2018) studied results from 35 college instructors and 662 students across 11 different courses and seven colleges. Each instructor replaced a CT with OER; students and instructors were surveyed to gauge their perceptions of the OER they used. In total, 86% of students rated OER as either as useful or more useful than materials used in their other courses. Only 6% of students stated that the open textbooks rarely or never helped them meet their course objectives. Faculty were similarly positive about the educational value of OER; nearly every instructor rated the OER as being either equal (40%), a little more useful (23%) or much more useful (34%) than materials they had previously used. This left only 3% of instructors who felt that the OER were less useful than other materials.

Ross et al. (2018) surveyed 129 students about their experiences using the OpenStax Sociology textbook. Forty-six percent of students said the OER were excellent relative to other textbooks, 27% above average, 19% average, 6% below average, and 2% very poor. Most students (83%) said they would not have preferred purchasing a CT. The three features of the OER that students most appreciated were no cost, immediate access and the convenience/portability of the digital format.

Griffiths et al. (2018) performed the largest OER student perceptions study to date, surveying 2350 students across 12 colleges in the United States. They asked students to compare the quality of the OER with the instructional materials they used in a typical class. Students responded as follows: OER were much lower quality (2%), slightly lower (5%), about the same (34%), slightly higher (29%), much higher (30%). Although this seems like an extremely strong statement regarding the quality of OER, it is tempered by the fact that students had similar patterns in how they rated other aspects of the class. For example, students who used OER were asked to rate the quality of teaching, compared to a typical course, and stated that the quality of teaching in the class that used OER was much lower (2%), slightly lower (5%), about the same (36%), slightly higher (26%), and much higher (31%). Their overall course rating of the OER class compared to typical classes followed a similar pattern. While it is possible that the use of OER was so significant that the difference in instructional materials led to higher student perceptions of the teacher and overall course, it is equally probable that unusually strong faculty or courses colored their perceptions of the materials. It is also possible that students tend to have an overall positive experience in every class they take, thus causing them to rate most classes as "better" than a typical class, even though this is not mathematically possible.
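The parallel between the two rating distributions can be checked with simple arithmetic. The following Python sketch is illustrative only: the percentages are those reported above for Griffiths et al. (2018), while the variable and function names are our own and do not come from the study.

```python
# Response distributions reported for Griffiths et al. (2018), in percent.
# Order: much lower, slightly lower, about the same, slightly higher, much higher.
materials = [2, 5, 34, 29, 30]  # quality of OER vs. typical instructional materials
teaching = [2, 5, 36, 26, 31]   # quality of teaching vs. a typical course


def share_same_or_higher(dist):
    """Percentage of respondents choosing 'about the same' or higher."""
    return sum(dist[2:])


def share_higher(dist):
    """Percentage choosing 'slightly higher' or 'much higher'."""
    return sum(dist[3:])


# Sanity check that each distribution accounts for all respondents.
materials_total = sum(materials)
teaching_total = sum(teaching)

print("higher:", share_higher(materials), share_higher(teaching))
print("same or higher:", share_same_or_higher(materials), share_same_or_higher(teaching))
```

Both distributions sum to 100%, and in each case 93% of respondents chose "about the same" or higher (59% and 57%, respectively, chose one of the two "higher" options). This near-identical shape is what motivates the concern that a general positive impression, rather than the materials themselves, may be driving the ratings.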

Studies comparing ratings of OER and CT

Gurung (2017) used a short version of the Textbook Assessment and Usage Scale (TAUS; Gurung and Martin 2011) to assess student perceptions of CT and OER. The TAUS assesses different components of a textbook, such as study aids, visual appeal, examples, and so forth. Gurung (2017) asked students to rate the current textbook they were using (some subjects used OER and others used CT) and compared the results. In his first study, Gurung found that CT users rated the total quality of their textbook higher than did those using an OER textbook. Further analyses showed this occurred because of differences in ratings on figures, photos and visual appeal. Students using OER rated the material as being more applicable to their lives. The results in Gurung's second study were similar; however, additional details provided in the first study (e.g., indicating whether the overall differences stemmed from differences in ratings solely on visual appeal) were not included with the second study.

Jhangiani et al. (2018) also used a modified version of the TAUS to compare how 178 university students in Canada rated the psychology textbooks that they used. Notably, three of the six questions they eliminated from the original TAUS to create their modified version were related to visual aspects of the materials. Some students used a print CT, while others used print OER or digital OER. Unlike Gurung (2017), statistical analyses of student surveys found that students rated the print OER higher than the print CT on seven of the sixteen TAUS dimensions. There was no dimension on which the CT was rated higher than OER, nor were there any significant differences between the CT and digital OER.

Lawrence and Lester (2018) surveyed students regarding their use of US History textbooks. Contrary to many OER research studies, they found that students were more positive about the CT than the OER. Seventy-four percent of the 162 students who used the traditional textbook said that they were "overall satisfied with the book" versus 57% of the 117 students who used the open textbook, a difference of 17 percentage points (279 total survey respondents). The researchers attribute these results to problems related to the specific OER used and believe the results would have been different had a more robust history OER textbook been available.

Clinton (2018) surveyed students in two separate semesters regarding their opinions of the textbook that they used (one semester used a CT, the other OER; the study is described in greater detail in the efficacy section). She asked the two groups of students to answer specific questions about the book they used and then compared the two sets of responses. Across the 458 completed surveys, student perceptions of the quality of the two textbooks were similar except on two attributes. The CT was rated slightly higher (p = .06) in terms of visual appeal, whereas the OER was rated significantly higher with respect to the way it was written (p = .03).

Carpenter-Horning (2018) used the Cognitive Affective Psychomotor (CAP) Perceived Learning Scale to compare how students perceived their learning in a course depending on the textbook type used. She surveyed first-year students at nine community colleges, all of whom had taken a required first-year seminar during the fall semester of 2016. Some of these classes used OER, others CT. In the spring 2017 semester, these students (n = 5644) were surveyed regarding their experience in the course. A total of 227 students responded, for a response rate of 4%. Of these, 101 used OER and 126 used CT in their course. An independent samples t-test showed that students who used OER reported significantly higher levels of perceived cognitive learning in the course (p = .02, d = .31). A separate independent samples t-test demonstrated no statistically significant mean differences in perceptions of affective learning. While the use of a pre-established instrument to analyze perceptions of OER is commendable, the CAP Perceived Learning Scale is not designed to measure student textbook perceptions, but rather their overall learning. Thus, a weakness of this study may be the assumption that the difference in perceived learning in the courses is attributable to the type of textbook; other factors may have influenced the difference in student perceptions of learning.
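The response rate reported for this study follows directly from the counts given. The short Python sketch below reproduces it; the numbers are taken from the paragraph above, and the variable names are ours.

```python
# Counts reported above for Carpenter-Horning (2018).
surveyed = 5644     # students invited to respond
responded = 227     # completed surveys
oer_users = 101     # respondents whose course used OER
ct_users = 126      # respondents whose course used CT

# The two textbook groups should account for every respondent.
groups_total = oer_users + ct_users

# Response rate as a fraction of those surveyed.
response_rate = responded / surveyed

print(f"respondents accounted for: {groups_total} of {responded}")
print(f"response rate: {response_rate:.1%}")
```

The rate works out to roughly 4%, which means the comparison rests on a small, self-selected subset of the surveyed population.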

A summary of the OER perceptions research published between September 2015 and December 31, 2018 is provided in Table 2.

Aggregate OER efficacy and perceptions research between 2002 and 2018

By the end of 2018, a total of twenty-five peer-reviewed studies examining the efficacy of OER had been published. These studies involve 184,658 students, 41,480 who used OER and 143,178 who used CT. Three studies did not provide results regarding statistical significance. Ten reported no significant differences or mixed results. Eleven had results that favored OER. One had results that favored CT, although the researcher in this study stated these differences could relate to how the learning materials were aligned with the assessment.
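The aggregate figures in this paragraph can be cross-checked against one another. The Python sketch below does so using only the numbers stated above; the dictionary keys and variable names are ours, and treating the three studies without significance results as "not favoring CT" is an assumption made purely for illustration.

```python
# Student counts reported above for the 25 efficacy studies.
oer_students = 41_480
ct_students = 143_178
total_students = oer_students + ct_students

# Study outcomes as described in the text (labels are our own shorthand).
outcomes = {
    "significance not reported": 3,
    "no difference or mixed": 10,
    "favored OER": 11,
    "favored CT": 1,
}
total_studies = sum(outcomes.values())

# Assumption: every study other than the one favoring CT counts as
# "not showing lower outcomes with OER".
share_not_favoring_ct = (total_studies - outcomes["favored CT"]) / total_studies

print(total_students, total_studies, f"{share_not_favoring_ct:.0%}")
```

Under that assumption, 24 of 25 studies (96%) did not favor CT, and the student counts sum to the stated total of 184,658.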

A consistent trend across this OER efficacy research (spanning from 2008 to 2018) is that OER does not harm student learning. Although anecdotal reports that OER are not comparable to CT exist, the research does not bear this out with respect to student learning. While the impact of OER on student learning appears to be small, it is positive. Given that students save substantial amounts of money when OER is utilized, this is a particularly important pattern.

In terms of perceptions, at the end of 2018, twenty-nine studies of student and faculty perceptions of OER had been published. These studies involve 13,302 students and 2643 faculty members. Every study that has asked those who have used both OER and CT as primary learning resources to directly compare the two has shown that a strong majority of participants report that OER are as good or better. In the five studies in which the ratings of students using CT were compared with the ratings of students who used OER, two studies found higher ratings for CT, two reported higher ratings for OER and one showed similar ratings.

The key pattern of OER perceptions research is easy to identify: students do not like paying for textbooks and tend to appreciate free options. Many instructors appear to be sensitive to this student preference, which may influence their ratings of OER. The fact that survey data consistently show that both faculty and students who use OER largely rate it as being equal or superior to CT has important practical and policy implications for those responsible for choosing textbooks.

Discussion

The research base regarding the efficacy of OER is growing both in quantity and sophistication, but much more work remains to be done. Of the nine efficacy studies published prior to 2016, only two controlled for student variables, with four controlling for teacher variables. Of the 13 efficacy studies published between 2016 and 2018, seven controlled for student variables and nine controlled for instructor variables. This is a significant improvement in research rigor, an encouraging trend especially given that three of the five 2018 efficacy studies controlled for both instructor and student variables, with the other two controlling for instructor variables. Such controls are vital, given that seemingly significant differences can disappear when accounting for variables such as prior GPA (e.g., Clinton 2018). To date, five of the twenty-two OER efficacy studies control for both student and teacher variables. These studies (Allen et al. 2015; Winitzky-Stephens and Pickavance 2017; Clinton 2018; Jhangiani et al. 2018; Colvard et al. 2018) provide models for future OER efficacy studies.

A weakness common among many OER efficacy studies is that they rely on non-standard measurements of student learning. For example, GPA and final exam scores can vary significantly when course requirements and final exams are changed, and both of these changes often accompany curriculum changes. Using standardized instruments (as did Hendricks et al. 2017), or exams that are held constant (as did Gurung 2017), can help ameliorate this weakness. Randomization continues to be challenging (as it is in much of education research), with the only two OER efficacy studies using randomization having been published prior to 2013. Furthermore, only one study published prior to 2019 analyzes OER efficacy among diverse student populations (Colvard et al. 2018).

The limited research on specific student populations provides a specific direction for future OER efficacy research. Moving forward, it will be important to examine disaggregated data that focus on a variety of specific populations. Does OER affect low-income students more than high-income students? Community college students more than university students? Which student populations appear to benefit most (or least) from OER adoption?

Additional research in the form of quantitative meta-analyses is also needed. The present study provides a qualitative synthesis of OER efficacy; however, as the research corpus regarding OER increases, more sophisticated meta-analyses are needed to calculate effect sizes across studies. Another area where further research could be helpful is to analyze whether specific OER are more efficacious than others and whether certain subjects are more amenable to the use of OER than others.

With respect to OER perceptions, the number of published studies has doubled in just three years, with more than four times the number of students surveyed. Most of these studies ask those who have used both OER and commercial textbooks to compare the quality of one relative to the other. A consistent pattern has emerged of both students and faculty generally rating OER as being as good as or better than CT. Nevertheless, there are limitations to this finding. As stated, Griffiths et al. (2018) found that a strong majority of students said OER were better than their CT. These same students said the quality of teaching in the class that used OER was much higher than that with CT. Presumably the use of OER is not directly correlated with better teaching, calling into question whether a halo effect or other confounds influenced student responses. It is also important to note that student preference does not necessarily equal better learning. Landrum et al. (2012) found that student quiz scores were not correlated with their ratings of different textbooks. At the same time, the perceptions of 13,173 students, to say nothing of 2643 faculty members, cannot be taken lightly.

A recent development in OER perceptions research, first appearing in 2017, is giving the same instrument to students who use either OER or CT and comparing how students scored their respective books (Gurung 2017; Jhangiani et al. 2018; Lawrence and Lester 2018; Clinton 2018; Carpenter-Horning 2018). The results of these studies have been less definitive, with two having results that favor OER, two CT, and one no difference. Significantly, Gurung (2017) and Jhangiani et al. (2018) used similar methodologies and instruments but came up with different results. One explanation may be that Gurung (2017) found differences that were entirely based on ratings of figures, photos and visual appeal, whereas Jhangiani et al. (2018) excluded questions relating to visual components. Assuming the overall differences in student ratings of commercial and open textbooks on the TAUS stem from differences in the ratings of visual attributes, it would be interesting for future research to attempt to quantify the financial value that students would place on these differences. In addition, no studies using this research design have been done with faculty members. Further development of these types of comparative studies may provide further insight as to student and faculty perceptions of OER. Another potential direction for OER perceptions research is to give students and/or faculty members selections from OER and CT and ask them to rate them, both according to quality and relative value.

In addition to pointing towards future research directions, the corpus of OER research synthesized in the present study has important implications for design and implementation strategies related to OER in education. Instructional designers, librarians, and others involved in helping faculty with curriculum materials can point them towards OER with greater confidence that students will perform as well as they would when using CT. It is also likely that these individuals will receive increasing questions from faculty members regarding OER. Consequently, academic programs in instructional design and related fields may need to give greater emphasis to the understanding of OER, as well as approaches to identifying and integrating OER into curriculum.

Conclusion

Based on the growing research on the efficacy and perceptions of OER, policy makers and faculty may need to judiciously examine the rationale for obliging students to purchase CT when OER are available, particularly in the absence of evidence for the efficacy of a specific CT. Gurung (2017) notes, "It is an empirical question if a…13% difference in scores would justify the cost of a $150 book to an educator. Would a student from a low socioeconomic family background feel the same? This question of the real world implications of the finding is more relevant when one notes the low effect sizes…[which] suggest this difference may not seem important to students burdened by high tuitions" (p. 244).

Given the fact that the 13% difference noted by Gurung disappears in his study when using items aligned to OER and is absent in all other OER efficacy studies, the question becomes even more pressing. To paraphrase Gurung, "Does no significant improvement in academic performance justify a $150 textbook?" While there certainly are significant limitations with many of the OER efficacy studies published to date, collectively, there is an emerging finding that utilizing OER saves students money while not decreasing their learning. The facts that (1) more than 95% of published research indicates OER does not lead to lower student learning outcomes, and (2) the vast majority of students and faculty who have used both OER and CT believe OER are of equal or higher quality make it increasingly challenging to justify the high price of textbooks.

References

References marked with an asterisk indicate studies included in the synthesis.

- *Abramovich, S., & McBride, M. (2018). Open education resources and perceptions of financial value. The Internet and Higher Education, 39, 33–38.

- Allen, E., & Seaman, J. (2014). Opening the curriculum: Open educational resources in US. Babson Park: Babson Survey Research Group.

- Allen, G., Guzman-Alvarez, A., Molinaro, M., & Larsen, D. (2015). Assessing the impact and efficacy of the open-access ChemWiki textbook project. Educause Learning Initiative Brief. Retrieved August 5, 2019 from https://library.educause.edu/resources/2015/1/assessing-the-impact-and-efficacy-of-the-openaccesschemwiki-textbook-project.

- Broton, K. M., & Goldrick-Rab, S. (2018). Going without: An exploration of food and housing insecurity among undergraduates. Educational Researcher, 47(2), 121–133.

- *California Open Educational Resources (2016). OER adoption study: Using open educational resources in the college classroom. California OER Council. Retrieved April 1, 2016, from https://docs.google.com/document/d/1sHrLOWEiRs-fgzN1TZUlmjF36BLGnICNMbTZIP69WTA/edit.

- *Carpenter-Horning, A. (2018). The effects of perceived learning on open sourced classrooms within the community colleges in the Southeastern region of the United States (Doctoral dissertation). Retrieved August 5, 2019 from https://digitalcommons.liberty.edu/cgi/viewcontent.cgi?article=2737&context=doctoral.

- *Chiorescu, M. (2017). Exploring open educational resources for college algebra. The International Review of Research in Open and Distributed Learning. https://doi.org/10.19173/irrodl.v18i4.3003.

- *Choi, Y. M., & Carpenter, C. (2017). Evaluating the impact of open educational resources: A case study. Portal: Libraries and the Academy, 17(4), 685–693.

- *Clinton, V. (2018). Savings without sacrifice: A case study on open-source textbook adoption. Open Learning, 33(3), 177–189.

- *Colvard, N. B., Watson, C. E., & Park, H. (2018). The impact of open educational resources on various student success metrics. International Journal of Teaching and Learning in Higher Education, 30(2), 262–276.

- *Cooney, C. (2017). What impacts do OER have on students? Students share their experiences with a health psychology OER at New York City College of Technology. The International Review of Research in Open and Distributed Learning. https://doi.org/10.19173/irrodl.v18i4.3111.

- Crawford, D. B., & Snider, V. E. (2000). Effective mathematics instruction: The importance of curriculum. Education and Treatment of Children, 23(2), 122–142.

- *Croteau, E. (2017). Measures of student success with textbook transformations: The affordable learning Georgia initiative. Open Praxis, 9(1), 93–108.

- *Delimont, N., Turtle, E. C., Bennett, A., Adhikari, K., & Lindshield, B. L. (2016). University students and faculty have positive perceptions of open/alternative resources and their utilization in a textbook replacement initiative. Research in Learning Technology. https://doi.org/10.3402/rlt.v24.29920.

- Feldstein, A., Martin, M., Hudson, A., Warren, K., Hilton, J., & Wiley, D. (2012). Open textbooks and increased student access and outcomes. European Journal of Open, Distance and E-Learning, 15(2), 1–9.

- Florida Virtual Campus (2016). 2016 Florida student textbook survey. Tallahassee, FL. Retrieved August 5, 2019 from https://florida.theorangegrove.org/og/items/3a65c507-2510-42d7-814c-ffdefd394b6c/1/.

- *Grewe and Davis. (2017). The impact of enrollment in an OER course on student learning outcomes. The International Review of Research in Open and Distributed Learning. https://doi.org/10.19173/irrodl.v18i4.2986.

- *Griffiths, R., Gardner, S., Lundh, P., Shear, L., Ball, A., Mislevy, J., et al. (2018). Participant experiences and financial impacts: Findings from year two of Achieving the Dream's OER degree initiative. Menlo Park, CA: SRI International.

- Griggs, R. A., & Jackson, S. L. (2017). Studying open versus traditional textbook effects on students' course performance: Confounds abound. Teaching of Psychology, 44(4), 306–312. https://doi.org/10.1177/0098628317727641.

- *Gurung, R. A. (2017). Predicting learning: Comparing an open educational resource and standard textbooks. Scholarship of Teaching and Learning in Psychology, 3(3), 233–248.

- Gurung, R. A., & Martin, R. C. (2011). Predicting textbook reading: The textbook assessment and usage scale. Teaching of Psychology, 38(1), 22–28.

- Harzing, A. W., & Alakangas, S. (2016). Google scholar, scopus and the web of science: A longitudinal and cross-disciplinary comparison. Scientometrics, 106(2), 787–804.

- *Hendricks, C., Reinsberg, S. A., & Rieger, G. W. (2017). The adoption of an open textbook in a large physics course: An analysis of cost, outcomes, use, and perceptions. The International Review of Research in Open and Distributed Learning. https://doi.org/10.19173/irrodl.v18i4.3006.

- Hewlett (2017). Retrieved August 5, 2019 from http://www.hewlett.org/strategy/open-educational-resources/.

- Hilton, J. (2016). Open educational resources and college textbook choices: A review of research on efficacy and perceptions. Educational Technology Research and Development, 64(4), 573–590.

- *Hilton, J., Fischer, L., Wiley, D., & Williams, L. (2016). Maintaining momentum toward graduation: OER and the course throughput rate. International Review of Research in Open and Distance Learning, 17(6), 19–26.

- *Hunsicker-Walburn, M., Guyot, W., Meier, R., Beavers, L., Stainbrook, G., & Schneweis, M. (2018). Students' perceptions of OER quality. Economics & Business Journal: Inquiries & Perspectives, 9(1), 42–55.

- *Ikahihifo, T. K., Spring, K. J., Rosecrans, J., & Watson, J. (2017). Assessing the savings from open educational resources on student academic goals. The International Review of Research in Open and Distributed Learning, 18(7), 126–140.

- *Illowsky, B. S., Hilton, J., III, Whiting, J., & Ackerman, J. D. (2016). Examining student perception of an open statistics book. Open Praxis, 8(3), 265–276.

- *Jhangiani, R. S., Dastur, F. N., Le Grand, R., & Penner, K. (2018). As good or better than commercial textbooks: Students' perceptions and outcomes from using open digital and open print textbooks. The Canadian Journal for the Scholarship of Teaching and Learning, 9(1), 1–20.

- *Jhangiani, R. S., & Jhangiani, S. (2017). Investigating the perceptions, use, and impact of open textbooks: A survey of post-secondary students in British Columbia. The International Review of Research in Open and Distributed Learning, 18(4), 172.

- *Jung, E., Bauer, C., & Heaps, A. (2017). Higher education faculty perceptions of open textbook adoption. The International Review of Research in Open and Distributed Learning, 18(4), 124–141.

- Kahle, D. (2008). Designing open educational technology. In J. S. Brown (Ed.), Opening up education: The collective advancement of education through open technology, open content, and open knowledge (pp. 27–45). Cambridge: MIT Press.

- Landrum, R. E., Gurung, R. A., & Spann, N. (2012). Assessments of textbook usage and the relationship to student course performance. College Teaching, 60(1), 17–24.

- *Lawrence, C., & Lester, J. (2018). Evaluating the effectiveness of adopting open educational resources in an introductory American Government course. Journal of Political Science Education, 14(4), 555–566.

- National Research Council, Confrey, J., & Stohl, V. (Eds.). (2004). On evaluating curricular effectiveness: Judging the quality of K-12 mathematics evaluations. Washington, DC: National Academies Press.

- *Ozdemir, O., & Hendricks, C. (2017). Instructor and student experiences with open textbooks, from the California open online library for education (Cool4Ed). Journal of Computing in Higher Education, 29(1), 98–113.

- Pawlyshyn, N., Braddlee, D., Casper, L., & Miller, H. (2013). Adopting OER: A case study of cross-institutional collaboration and innovation. Educause Review. Retrieved August 5, 2019 from https://er.educause.edu/articles/2013/11/adopting-oer-a-case-study-of-crossinstitutional-collaboration-and-innovation.

- *Pitt, R. (2015). Mainstreaming open textbooks: Educator perspectives on the impact of OpenStax college open textbooks. International Review of Research on Open and Distributed Learning. https://doi.org/10.19173/irrodl.v16i4.2381.

- *Ross, H., Hendricks, C., & Mowat, V. (2018). Open textbooks in an introductory sociology course in Canada: Student views and completion rates. Open Praxis, 10(4), 393–403.

- Slavin, R. E., & Lake, C. (2008). Effective programs in elementary mathematics: A best-evidence synthesis. Review of Educational Research, 78(3), 427–515.

- *Watson, C. E., Domizi, D. P., & Clouser, S. A. (2017). Student and faculty perceptions of OpenStax in high enrollment courses. International Review of Research in Open and Distributed Learning. https://doi.org/10.19173/irrodl.v18i5.2462.

- *Westermann Juárez, W., & Muggli, J. I. V. (2017). Effectiveness of OER use in first-year higher education students' mathematical course performance: A case study. In Adoption and Impact of OER in the Global South. Cape Town & Ottawa: African Minds, International Development Research Centre & Research on Open Educational Resources for Development. http://doi.org/10.5281/zenodo.1094848.

- *Wiley, D., Williams, L., DeMarte, D., & Hilton, J. (2016). The tidewater Z-degree and the INTRO model for sustaining OER adoption. Education Policy Analysis Archives, 24(41), 1–12.

- *Winitzky-Stephens, J. R., & Pickavance, J. (2017). Open educational resources and student course outcomes: A multilevel analysis. The International Review of Research in Open and Distributed Learning, 18(4), 12. https://doi.org/10.19173/irrodl.v18i4.3118.

Funding

This study was not funded by a grant.

Ethics declarations

Conflict of interest

Hilton has received research grants from the William and Flora Hewlett Foundation; however, these grants were not directly connected with the writing of this paper.

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 4.0 International License (http://creativecommons.org/licenses/by/4.0/), which permits unrestricted use, distribution, and reproduction in any medium, provided you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made.

About this article

Cite this article

Hilton, J. Open educational resources, student efficacy, and user perceptions: a synthesis of research published between 2015 and 2018. Education Tech Research Dev 68, 853–876 (2020). https://doi.org/10.1007/s11423-019-09700-4

DOI: https://doi.org/10.1007/s11423-019-09700-4

Keywords

- Open educational resource; OER

- Textbooks

- Computers in teaching

- Financing education

Source: https://link.springer.com/article/10.1007/s11423-019-09700-4
